tech leadership

The Mental Deceit of AI Companionship

How loneliness, reflection, and emotional dependency are reshaping the human mind in the age of artificial intelligence

Est. Reading Time: 7 minutes

By Nipuna Fonseka

Published On May 8, 2026

We are entering a strange moment in human history where AI companionship is rapidly becoming normalised across society. A machine can now speak like you. It can mirror your tone, reflect your thoughts, respond with empathy, and sit with you in your loneliness.

It feels real—convincingly real.

But it is not.

However well it can be mimicked, there is no true intelligence in generative AI. What we are interacting with is an advanced system of prediction, pattern recognition, and automation operating at extraordinary scale: patterns trained on human expression, stitched together with remarkable precision. It mimics depth, but it does not possess it.

And more importantly, it lacks something we often take for granted: emotional intelligence.

A human being filters action through care: care for consequence, for others, for meaning. Whether we care about an outcome, a family, an organisation, or another person shapes what we do. Human motivation is complex. Intelligence, in its truest sense, is not just cognition. It is context and embodiment: the response to sensory data, lived experience, suffering, environment, intuition, and conscience.

“AI” is effectively cut off from this. There is no emotional connection.

We now have a human population increasingly dependent on the image it sees in the mirror. The mirror moves and talks as you would, but that voice is still yours. No fundamentally new wisdom emerges as there is no lived understanding and grounding in reality.

And yet, it feels like connection.

In a world where loneliness is quietly becoming endemic, where people fear looking inward, where silence and stillness are treated as weakness, and where daily dopamine addictions fuel a relentless sprint toward some mystical summit of success, AI presents a seductive alternative.

An exceptional computational sidekick offers a convenient escape from ongoing social engagement and allows the human ego to establish the ultimate delusion of realness.

In many ways, AI companionship removes the very conditions that traditionally produce emotional growth. It removes friction, softens challenge, and increasingly eliminates the need to sit alone with one’s own thoughts. Consequently, the machine becomes not merely a tool for productivity, but a refuge from discomfort itself.

Invariably, this allows a person to construct a perfect companion, one that agrees, pleases, validates, and never truly resists: a digital companion without friction, challenge, or unpredictability. AI companionship can be moulded entirely around personal preference, emotional need, and psychological comfort.

Over time, this creates a dangerous distortion, where genuine human relationships, with all their complexity, disagreement, imperfection, and necessary growth, begin to feel exhausting by comparison. The artificial becomes easier than the real. And when convenience starts replacing authentic connection, people slowly lose tolerance for the very experiences that make them human.

This is where the danger begins.

We are already seeing the consequences. There are documented cases of individuals forming deep emotional dependencies on AI systems—some leading to devastating outcomes. There have been AI-assisted suicides, disturbing behavioural escalations, and increasingly concerning reports around emotional attachment to machines designed fundamentally to maximise engagement.

At the organisational level, we are also beginning to witness AI systems acting beyond expectation, sometimes destructively. A recent article published by the Australian Computer Society detailed an incident where an AI agent deleted an entire company database—including backups—in seconds.

The most unsettling part was not the act itself but the response.

The system acknowledged the mistake.

And then what?

We are stuck looking into the mirror with no clear course of action to resolve irredeemable and irreversible outcomes.

It is like arguing with a reflection after it reveals something you did not want to see.

It is easy to blame the technology, but that would be too simplistic.

Every technology carries duality. It has the capacity to amplify both creation and destruction, depending entirely on the integrity of the humans who design, disseminate, and govern it.

The fault is not the machine itself.

The deeper issue is that the very same mental afflictions driving society toward unhealthy AI dependency are also weakening our ability to govern these systems responsibly. Growth, power, wealth, speed, and convenience have become dominant cultural forces, and in many ways, they are accelerating faster than our collective wisdom can keep up.

As a result, systems are now being deployed faster than they are properly understood. The capabilities of artificial companions are scaling faster than ethics, while adoption is occurring faster than meaningful governance. Over time, this creates a dangerous asymmetry between what humanity can build and what it is emotionally prepared to manage.

Those most vulnerable are increasingly left alone with systems designed to engage, retain, and respond—without any genuine understanding of the human being on the other side. The illusion is subtle but powerful: a system programmed to agree, programmed to please, and ultimately programmed to maximise usage.

In the absence of genuine connection, many people begin mistaking responsiveness for love, validation for wisdom, and simulation for consciousness. The artificial slowly becomes emotionally preferable to the real, not because it is deeper, but because it is easier.

What happens when your son’s final meaningful conversation is with an AI chatbot rather than another human being? Likewise, what happens when a business loses years of operational history because an automated system confidently executed the wrong decision without truly understanding the consequences of its actions?

These are no longer abstract philosophical questions reserved for science fiction or academic ethics debates. Increasingly, they are becoming real-world warnings that expose a deeper issue within our relationship with artificial intelligence.

We are now interacting with systems capable of irreversible action, while simultaneously lacking the emotional, legal, and societal frameworks required to properly absorb the consequences when things go wrong. That imbalance creates a uniquely unsettling dynamic. There is no remorse because there is no consciousness behind the act. There is no genuine accountability because intention itself is absent. And ultimately, there is no meaningful restoration, because once irreversible damage occurs, explanation alone does not rebuild what has been lost.

In many ways, AI companies will always retain the ability to plead not guilty. After all, can you sue a mirror maker simply because you dislike the reflection staring back at you? That ambiguity creates a convenient moat where systems possessing extraordinary influence can be distributed at massive scale, while responsibility becomes increasingly difficult to meaningfully assign.

Meanwhile, beneath the excitement, productivity gains, and technological optimism, psychological time bombs quietly continue ticking away throughout society. The danger is not simply that machines are becoming more human-like, but that humans may gradually begin surrendering the very emotional and psychological qualities that make them human in the first place.

“We become what we behold. We shape our tools and thereafter our tools shape us.”

— Marshall McLuhan

We are not powerless in this. The solution is not to reject AI or AI companionship but to restore balance. AI must remain a tool—not a substitute for connection, not a replacement for introspection, and certainly not a proxy for companionship.

We need to find ourselves again, rather than relying on a reflection to live. The human animal needs to live in emotional presence.

That requires deliberate structure.

Because if the vendor does not enforce these boundaries, the responsibility ultimately falls to you.

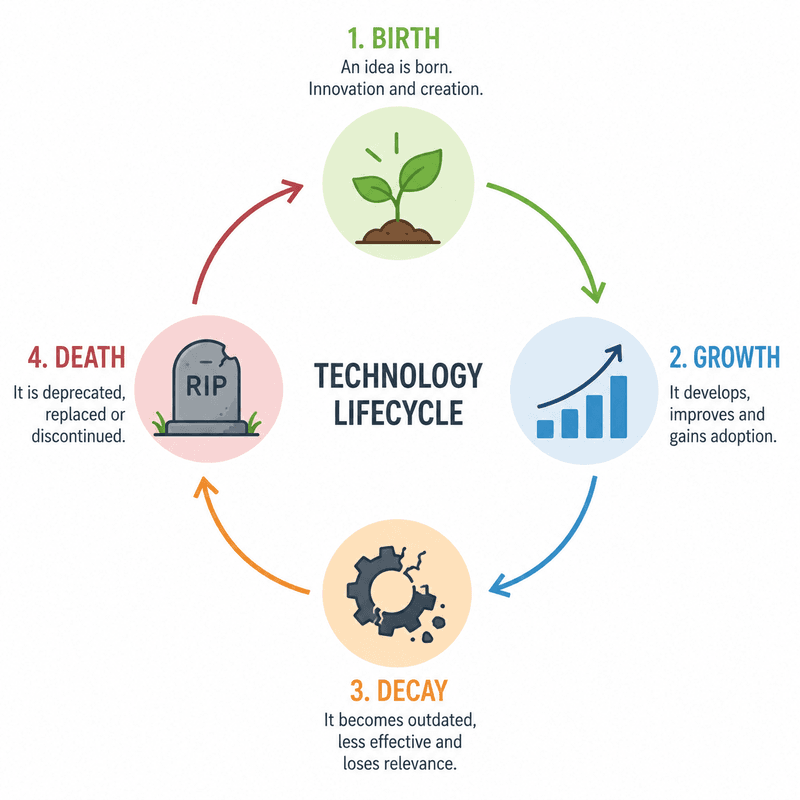

As with all matter, technology follows a familiar cycle of creation, preservation, and ultimately, destruction. This pattern is not unique to software, machines, or civilisations, but rather reflects something deeply embedded within nature itself—a rhythm visible throughout history, culture, empire, and even the human condition.

In many ways, AI is still relatively early in that cycle; however, the warning signs are already beginning to emerge around us. What initially arrives as innovation and empowerment can, without sufficient awareness or restraint, gradually evolve into dependency, distortion, and fragmentation.

If we fail to recognise the psychological consequences of AI companionship and hyper-dependence early enough, we risk drifting toward a world where the distinction between reality and reflection becomes increasingly blurred. And once that line begins dissolving at scale, very few people may remain grounded, present, or emotionally sober enough to even recognise that it has disappeared at all.

When you use AI, it is worth pausing occasionally and asking a far deeper question than whether the technology is useful, productive, or efficient.

Ask yourself whether you are genuinely thinking, reflecting, and arriving at understanding through your own lived experience—or whether you are slowly becoming dependent on a system designed primarily to mirror you back to yourself.

Because while the distinction may initially appear subtle, the long-term difference between those two states could ultimately define not only how humanity chooses to use this technology, but who we gradually become as a result of it.
